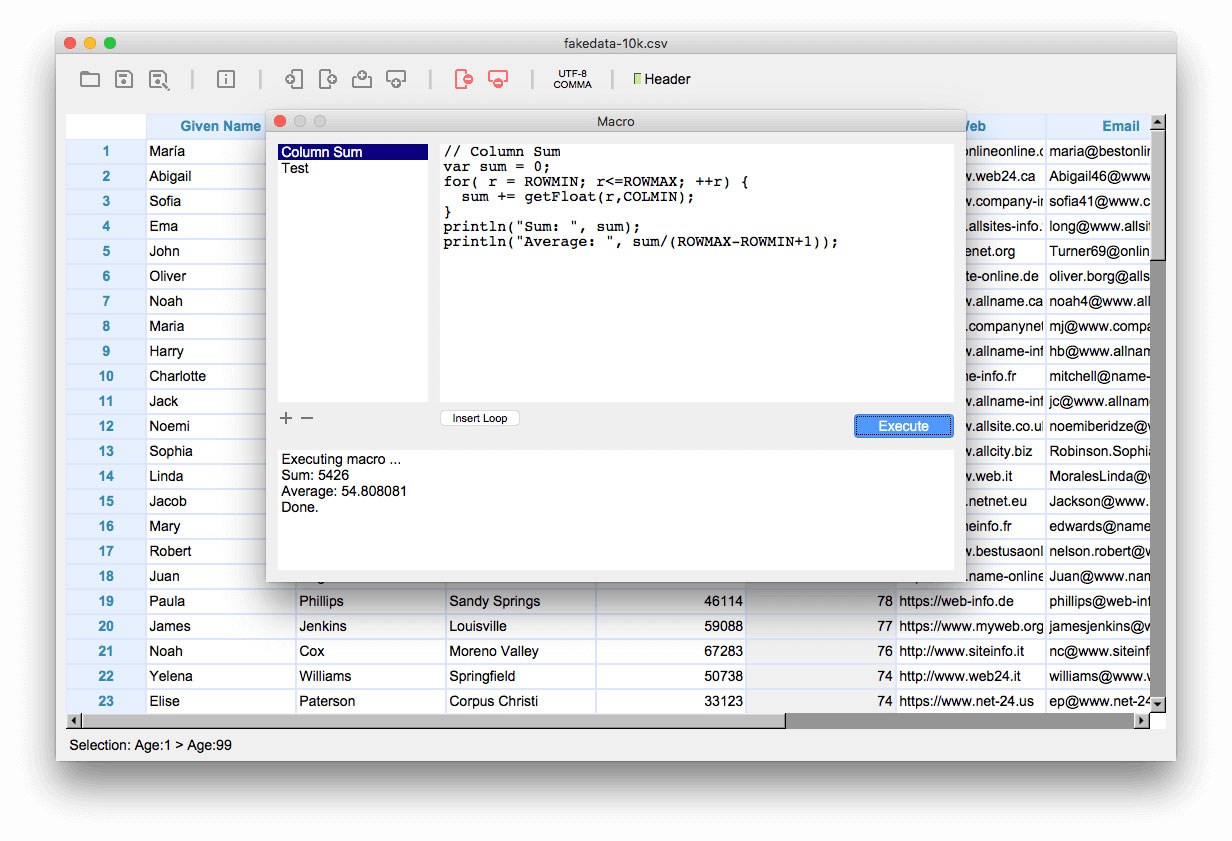

At a certain point, if you want to use big data, you need big computer(s). Do you really have to do this locally? Even if you aren't ready for distributed computing over clusters, you could just get an adequately sized VM instance. It's much cheaper to give you a better computer than to pay you to wait around for a small one to finish. In India, the price ratio between labor and AWS costs is lower than in the US, sure, but it's still well worth it.

What's your overall goal here, though? People are providing help with how to read the file, but then what? Do you want to do a join/merge? You're gonna need more tricks to get through that. But then what? Is the rest of your algorithm also chunkable? Are you going to have enough RAM left to process anything? And what about CPU performance? Is one little i7 enough? Do you plan on waiting hours or days for results? This might all be acceptable for your use case, sure, but we don't know that.

If you do not need to process everything at once, you can read the file in chunks: `reader = pd.read_csv('tmp.sv', sep='|', chunksize=4000)`. See the pandas documentation for further information.

A CSV file takes an enormous amount of memory in RAM. See this article for more information. It was written for another piece of software (Tablecruncher), but it gives a good idea of the problem:

> You can estimate the memory usage of your CSV file with this simple formula: […] where R is the number of rows, C the number of columns and F the file size in bytes.
>
> One of my test files is 524 MB in size and contains 10 columns in 4.4 million rows. While this file is opened in Tablecruncher, the Activity Monitor reports 1.4 GB RAM used, so the formula represents a rather accurate estimate.

If you need to process everything at once and chunking really isn't an option, you have only two options left.
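A minimal sketch of how the chunked reader can be consumed, assuming pandas 1.2 or later (where the object returned by `read_csv(..., chunksize=...)` can be used as a context manager). The function name `sum_column_in_chunks`, the column name `value` and the in-memory sample data are hypothetical stand-ins for a real file such as `tmp.sv`. Summing a column is just one example of an operation that can be computed one chunk at a time.

```python
import io

import pandas as pd


def sum_column_in_chunks(source, column, sep="|", chunksize=4000):
    """Sum one numeric column while holding at most `chunksize` rows in RAM.

    `source` can be a path (e.g. 'tmp.sv') or any file-like object.
    """
    total = 0
    row_count = 0
    # With chunksize set, read_csv returns an iterator of DataFrames
    # instead of loading the whole file at once.
    with pd.read_csv(source, sep=sep, chunksize=chunksize) as reader:
        for chunk in reader:
            total += chunk[column].sum()  # partial result for this chunk
            row_count += len(chunk)
    return total, row_count


# Hypothetical pipe-separated sample standing in for a large file.
data = "id|value\n" + "\n".join(f"{i}|{i * 2}" for i in range(10))
total, rows = sum_column_in_chunks(io.StringIO(data), "value", chunksize=3)
print(total, rows)  # 90 10
```

Sums, counts and row filters combine cleanly across chunks. Anything that needs the whole dataset at once, such as a global sort, does not fit this pattern as directly.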
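As an empirical alternative to a formula-based estimate, you can stream the file in chunks and add up each chunk's `DataFrame.memory_usage(deep=True)`. This keeps peak memory at roughly one chunk while approximating what the fully loaded DataFrame would need. This is a sketch under stated assumptions, not the article's formula. The function name `estimate_dataframe_bytes` and the sample data are hypothetical, and the result is approximate because dtypes are inferred per chunk and each chunk carries its own small index.

```python
import io

import pandas as pd


def estimate_dataframe_bytes(source, sep="|", chunksize=100_000):
    """Approximate the RAM a fully loaded DataFrame would need.

    Streams the file chunk by chunk and sums each chunk's deep memory usage,
    so only about one chunk is held in memory at a time.
    """
    total_bytes = 0
    rows = 0
    with pd.read_csv(source, sep=sep, chunksize=chunksize) as reader:
        for chunk in reader:
            # deep=True also counts the string data behind text columns,
            # which is usually where CSV data grows the most in memory.
            total_bytes += int(chunk.memory_usage(deep=True).sum())
            rows += len(chunk)
    return total_bytes, rows


# Hypothetical sample; replace io.StringIO(...) with a real file path.
csv_text = "name|score\n" + "\n".join(f"user{i}|{i}" for i in range(1000))
estimated, rows = estimate_dataframe_bytes(io.StringIO(csv_text), chunksize=250)
ratio = estimated / len(csv_text.encode())
print(f"{rows} rows, about {ratio:.1f}x the CSV size in memory")
```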
